When you build an application on a microservices architecture, it is broken down into independent services, each with its own data model, that can be developed and deployed independently of one another. Orchestrators are therefore essential for managing these services in production. The same is true of traditional applications that are split into containers. Such systems are complex to manage and scale out, and an orchestrator helps you run production-ready, scalable multi-container applications.

A cloud-native and containerized application can be deployed on Azure in several ways and no single perfect solution exists. This article will guide you in finding an approach that fits well with your team and your development requirements.

Azure Services

Let us understand what each Azure service can do for you:

| Azure Service | Functionality |
| --- | --- |
| Azure Container Apps | Build serverless microservices based on containers |
| Azure App Service | Provides fully managed hosting for deploying web applications, including websites and web APIs, using code or containers |
| Azure Container Instances (ACI) | Provides a single pod of Hyper-V isolated containers on demand. It is a lower-level “building block” option compared to Container Apps. ACI does not provide scaling, load balancing, or certificates |
| Azure Kubernetes Service | A fully managed Kubernetes option that gives direct access to the Kubernetes API and runs any Kubernetes workload |
| Azure Functions | A serverless Functions-as-a-Service (FaaS) solution that is optimized to run event-driven applications using the functions programming model |
| Azure Spring Cloud | Makes it easy to deploy Spring Boot microservice applications to Azure without any code changes |
| Azure Red Hat OpenShift | Jointly engineered, operated, and supported by Red Hat and Microsoft to provide an integrated product and support for running Kubernetes-powered OpenShift |

Azure Kubernetes Service (AKS) is one of the most popular services as it offers serverless Kubernetes, an integrated continuous integration and continuous delivery (CI/CD) experience, and enterprise-grade security and governance. With AKS, you can unite your development and operations teams on a single platform to rapidly build, deliver, and scale applications with confidence.

Here are some benefits of using AKS:

Service discovery and load balancing: Kubernetes can expose a container using a DNS name or its own IP address. If traffic to a container is high, Kubernetes can load balance and distribute the network traffic so that the deployment is stable.

Storage orchestration: Kubernetes allows you to automatically mount a storage system of your choice, such as local storage, public cloud providers, and more.

Automated rollouts and rollbacks: You can describe the desired state for your deployed containers using Kubernetes, and it can change the actual state to the desired state at a controlled rate. For example, you can automate Kubernetes to create new containers for your deployment, remove existing containers, and adopt all their resources to the new container

Automatic bin packing: You provide Kubernetes with a cluster of nodes that it can use to run containerized tasks. You tell Kubernetes how much CPU and memory (RAM) each container needs. Kubernetes can fit containers onto your nodes to make the best use of your resources

Self-healing: Kubernetes restarts containers that fail, replaces containers, kills containers that don’t respond to your user-defined health check, and doesn’t advertise them to clients until they are ready to serve.

Secret and configuration management: Kubernetes lets you store and manage sensitive information, such as passwords, OAuth tokens, and SSH keys. You can deploy and update secrets and application configuration without rebuilding your container images, and without exposing secrets in your stack configuration
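Several of these benefits map onto fields of a Kubernetes Deployment manifest. The sketch below writes an illustrative manifest to a file. Every name and value in it (`api-service`, `api-secrets`, the image path, port 8080, the `/healthz` and `/ready` endpoints, the CPU and memory figures) is an assumption made for illustration and does not come from this article.

```shell
# Write an illustrative Deployment manifest; all names and values are placeholders.
cat > benefits-sketch.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-service
spec:
  replicas: 3
  strategy:
    type: RollingUpdate          # automated rollouts at a controlled rate
    rollingUpdate:
      maxUnavailable: 1
      maxSurge: 1
  selector:
    matchLabels:
      app: api-service
  template:
    metadata:
      labels:
        app: api-service
    spec:
      containers:
        - name: api-service
          image: mycontainerregistry.azurecr.io/api-service:v1
          ports:
            - containerPort: 8080
          resources:
            requests:            # the scheduler uses these for bin packing
              cpu: 250m
              memory: 256Mi
            limits:
              cpu: 500m
              memory: 512Mi
          livenessProbe:         # containers failing this check are restarted
            httpGet:
              path: /healthz
              port: 8080
          readinessProbe:        # no traffic is sent until this check passes
            httpGet:
              path: /ready
              port: 8080
          env:
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef:    # value is read from a Secret, not baked into the image
                  name: api-secrets
                  key: db-password
EOF
echo "wrote benefits-sketch.yaml"
```

Once a cluster is available, a file like this could be applied with `kubectl apply -f benefits-sketch.yaml`, and a bad rollout reverted with `kubectl rollout undo deployment/api-service`.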

AKS Implementation

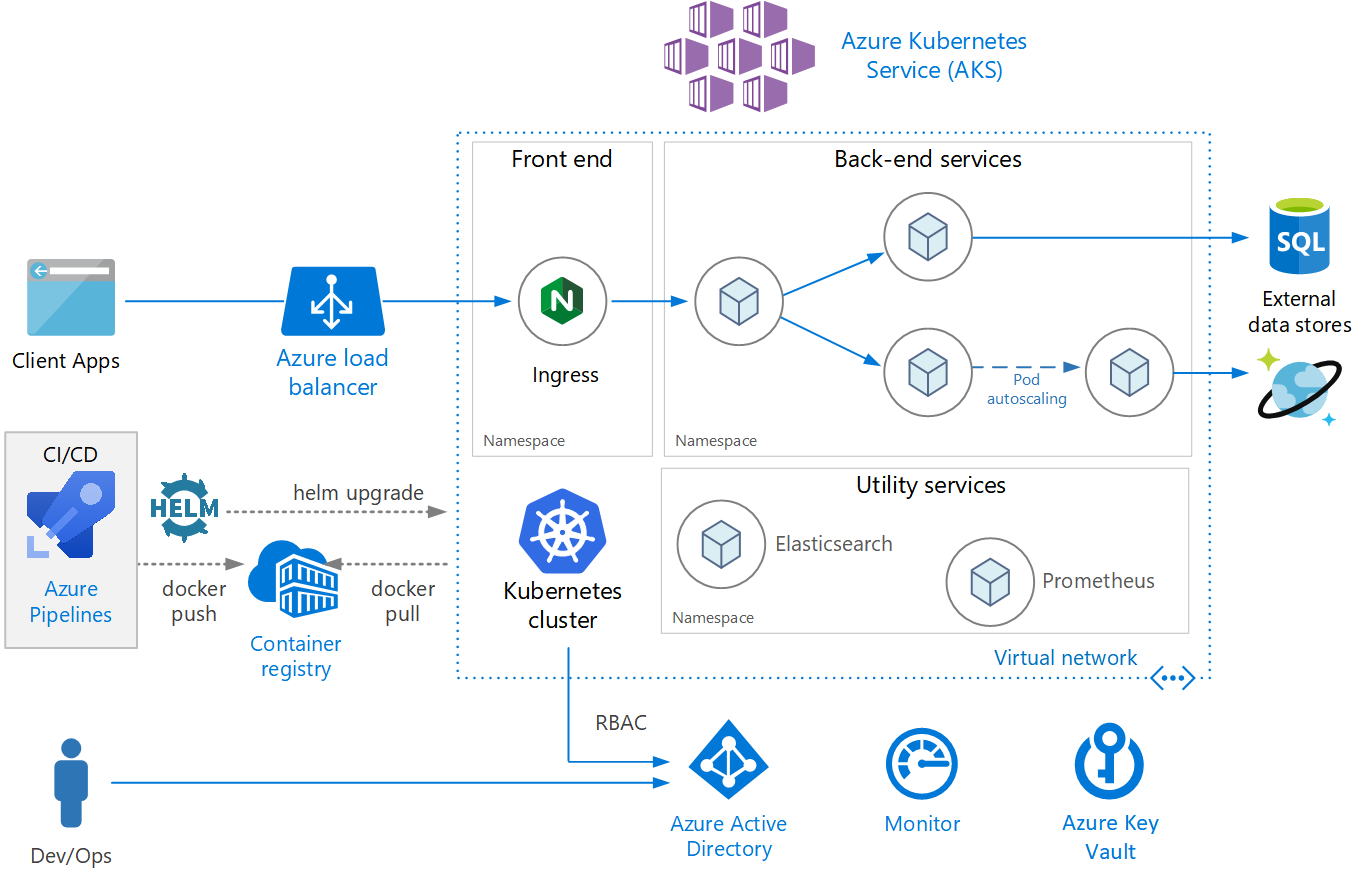

When deploying the microservices, AKS implementation follows a step-by-step procedure as showcased below:

Source: Microsoft Documentation

1. Create AKS cluster

Create an AKS cluster using the az aks create command:

az aks create --resource-group myResourceGroup --name myAKSCluster --generate-ssh-keys
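The cluster has to be created inside a resource group that already exists. The following is a minimal sketch of the whole provisioning step, assuming the resource group has not been created yet. The `eastus` location, the node count, and the `run` dry-run helper are assumptions added for illustration; by default the helper only prints each command so that nothing is provisioned by accident.

```shell
# Placeholder names taken from the commands in this article; the location is an assumption.
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"
LOCATION="eastus"

# Print each command by default; set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# The resource group must exist before a cluster can be created in it.
run az group create --name "$RESOURCE_GROUP" --location "$LOCATION"

# Create a small cluster; the node count is illustrative.
run az aks create --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
  --node-count 2 --generate-ssh-keys

# Query the provisioning state to confirm the cluster is ready.
run az aks show --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
  --query provisioningState --output tsv
```

Running the script with `DRY_RUN=0` executes the commands against the currently selected Azure subscription.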

2. Create Container Registry

Azure Container Registry allows you to build, store, and manage container images and artifacts in a private registry for all types of container deployments. Use Azure container registries with your existing container development and deployment pipelines.

az acr create --resource-group myResourceGroup --name myContainerRegistry --sku Basic

3. Integrate Container Registry with AKS Cluster

Integrate an existing ACR with existing AKS clusters by supplying valid values for acr-name or acr-resource-id as below.

az aks update --name myAKSCluster --resource-group myResourceGroup --attach-acr myContainerRegistry

The kubectl command-line client is a versatile way to interact with a Kubernetes cluster, including managing multiple clusters. To point kubectl at the AKS cluster, download its credentials as follows:

az aks get-credentials --resource-group myResourceGroup --name myAKSCluster
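Before deploying, it can help to push an image and confirm that the cluster can actually pull from the registry. The sketch below is one possible way to do that, assuming a Dockerfile in the current directory. The image name `api-service:v1` and the `run` dry-run helper are hypothetical; by default the helper only prints the commands.

```shell
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"
REGISTRY_NAME="myContainerRegistry"
IMAGE="api-service:v1"   # hypothetical image name and tag

# An ACR login server is the lowercase registry name followed by .azurecr.io
LOGIN_SERVER="$(printf '%s' "$REGISTRY_NAME" | tr '[:upper:]' '[:lower:]').azurecr.io"

# Print each command by default; set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Build the image in Azure from the local Dockerfile and push it to the registry.
run az acr build --registry "$REGISTRY_NAME" --image "$IMAGE" .

# Check that the cluster is able to pull images from the attached registry.
run az aks check-acr --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
  --acr "$LOGIN_SERVER"

# Confirm that kubectl is talking to the cluster.
run kubectl get nodes

echo "image reference: $LOGIN_SERVER/$IMAGE"
```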

4. Deploy microservices to AKS

kubectl provides the apply command, which applies a set of instructions to deploy the service. The instructions include the service name, number of replicas, server configuration, environment variables, and so on.

kubectl apply -f .\api-service.yaml
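The article does not show the contents of api-service.yaml, so the following is only a sketch of what such a file might contain: a Deployment with a replica count and an environment variable, plus a Service that exposes it inside the cluster. The image path, port numbers, labels, and the `APP_ENVIRONMENT` variable are assumptions.

```shell
# Write a hypothetical api-service.yaml with a Deployment and a Service.
cat > api-service.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-service
spec:
  replicas: 2                      # number of pod instances
  selector:
    matchLabels:
      app: api-service
  template:
    metadata:
      labels:
        app: api-service
    spec:
      containers:
        - name: api-service
          image: mycontainerregistry.azurecr.io/api-service:v1
          ports:
            - containerPort: 8080
          env:
            - name: APP_ENVIRONMENT
              value: production
---
apiVersion: v1
kind: Service
metadata:
  name: api-service
spec:
  type: ClusterIP                  # reachable only inside the cluster
  selector:
    app: api-service
  ports:
    - port: 80
      targetPort: 8080
EOF
echo "wrote api-service.yaml"
```

After applying the file, `kubectl get pods -l app=api-service` would list the pods created by the Deployment.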

At this point, the API is running on the AKS cluster. Additional steps are needed to make it production ready: securing the API with a gateway, storing application secrets in a secure location such as Key Vault, and scaling the AKS cluster to match the application load.

5. Choosing a gateway technology

An API gateway sits between clients and services. It acts as a reverse proxy, routing requests from clients to services. It may also perform various cross-cutting tasks such as authentication, SSL termination, and rate limiting.

Here are a few ways to implement an API gateway in your application:

Reverse proxy server: Nginx and HAProxy are popular reverse proxy servers that support features such as load balancing, SSL, and layer 7 routing. They are both free, open-source products, with paid editions that provide additional features and support options.

Service mesh ingress controller: If you are using a service mesh such as Linkerd or Istio, consider the features that are provided by the ingress controller for that service mesh.

Azure Application Gateway: Application Gateway is a managed load balancing service that can perform layer-7 routing and SSL termination. It also provides a web application firewall (WAF).

Azure API Management: API Management is a turnkey solution for publishing APIs to external and internal customers. It provides features that are useful for managing a public-facing API, including rate limiting, IP restrictions, and authentication using Azure Active Directory or other identity providers.
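As an illustration of the reverse proxy option, the sketch below writes a Kubernetes Ingress manifest that routes `/api` traffic to a backend Service and terminates TLS. It assumes an NGINX ingress controller is already installed in the cluster under the ingress class `nginx`. The host `api.example.com`, the TLS secret `api-tls`, and the backend Service `api-service` are hypothetical names.

```shell
# Write a hypothetical Ingress manifest for an NGINX ingress controller.
cat > api-gateway-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: api-gateway
spec:
  ingressClassName: nginx          # assumes an NGINX ingress controller is installed
  tls:
    - hosts:
        - api.example.com
      secretName: api-tls          # TLS certificate stored as a Kubernetes Secret
  rules:
    - host: api.example.com
      http:
        paths:
          - path: /api
            pathType: Prefix
            backend:
              service:
                name: api-service  # requests are proxied to this Service
                port:
                  number: 80
EOF
echo "wrote api-gateway-ingress.yaml"
```

The managed options (Application Gateway and API Management) are configured through Azure rather than through a manifest like this one, so the choice largely depends on how much of the gateway you want to operate yourself.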

Azure Key Vault Integration with AKS Cluster

The Azure Key Vault Provider for Secrets Store CSI Driver allows for the integration of an Azure key vault as a secret store with an Azure Kubernetes Service (AKS) cluster via a CSI volume.

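It mounts secrets, keys, and certificates into a pod through a CSI volume, supports CSI inline volumes, can mount multiple secrets store objects as a single volume, syncs with Kubernetes secrets, and supports autorotation of mounted contents.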
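For example, a secret can be mounted into a pod through a CSI volume. The sketch below shows one way this might be wired up: enable the add-on on the cluster, then describe which Key Vault objects to mount in a SecretProviderClass. The class name, the secret name `db-password`, the use of a user-assigned managed identity, and all `REPLACE_WITH_...` values are placeholders, and the `run` dry-run helper (which only prints commands by default) is an assumption added for safety.

```shell
# Print each command by default; set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Enable the Key Vault provider for the Secrets Store CSI Driver on the cluster.
run az aks enable-addons --addons azure-keyvault-secrets-provider \
  --name myAKSCluster --resource-group myResourceGroup

# Describe which Key Vault objects should be made available to pods.
cat > secret-provider-class.yaml <<'EOF'
apiVersion: secrets-store.csi.x-k8s.io/v1
kind: SecretProviderClass
metadata:
  name: azure-kv-secrets
spec:
  provider: azure
  parameters:
    usePodIdentity: "false"
    useVMManagedIdentity: "true"
    userAssignedIdentityID: "REPLACE_WITH_IDENTITY_CLIENT_ID"
    keyvaultName: "REPLACE_WITH_KEY_VAULT_NAME"
    tenantId: "REPLACE_WITH_TENANT_ID"
    objects: |
      array:
        - |
          objectName: db-password
          objectType: secret
EOF

run kubectl apply -f secret-provider-class.yaml
```

A pod then references the class through a CSI volume that uses the `secrets-store.csi.k8s.io` driver, and the secret appears as a file at the volume's mount path.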

Scaling AKS Cluster

At this stage, with all the components connected, the next concern is scaling the application to handle the load. To run your applications in AKS, you may need to increase or decrease the amount of compute resources. As the number of application instances changes, you may also need to change the number of underlying Kubernetes nodes. For this, you can manually scale pods or nodes, use the horizontal pod autoscaler, use the cluster autoscaler, or burst into Azure Container Instances through virtual nodes.
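For example, two commonly used options are the horizontal pod autoscaler, which changes the number of pods, and the cluster autoscaler, which changes the number of nodes. The following sketch combines them. The target Deployment name `api-service`, the replica and node bounds, the 70% CPU target, and the `run` dry-run helper are all assumptions chosen for illustration.

```shell
# Scale pods between 2 and 10 replicas based on average CPU utilization.
cat > api-service-hpa.yaml <<'EOF'
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: api-service
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: api-service
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
EOF

# Print each command by default; set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

run kubectl apply -f api-service-hpa.yaml

# Let AKS add or remove nodes (here between 1 and 5) as pod demand changes.
run az aks update --resource-group myResourceGroup --name myAKSCluster \
  --enable-cluster-autoscaler --min-count 1 --max-count 5

# Manual alternative when the cluster autoscaler is not enabled:
#   az aks scale --resource-group myResourceGroup --name myAKSCluster --node-count 3
```

The horizontal pod autoscaler relies on the CPU requests declared on the target containers, so the Deployment needs resource requests for the utilization target to be meaningful.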

Cluster Security

One effective way to secure your AKS cluster is to secure access to the Kubernetes API server. To control this access, integrate Kubernetes RBAC with Azure Active Directory (Azure AD). This lets you secure AKS in the same way that you secure access to your Azure subscriptions.
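With Azure AD integration enabled on the cluster, Kubernetes RBAC bindings can name an Azure AD group by its object ID. The sketch below writes a read-only Role and binds it to such a group. The `dev` namespace, the role and binding names, and the all-zero object ID are placeholders and would need to be replaced with real values.

```shell
# Write a hypothetical read-only Role and bind it to an Azure AD group.
cat > dev-readonly-rbac.yaml <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: dev-read-only
  namespace: dev
rules:
  - apiGroups: ["", "apps"]
    resources: ["pods", "services", "deployments"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: dev-read-only-binding
  namespace: dev
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: dev-read-only
subjects:
  - kind: Group
    apiGroup: rbac.authorization.k8s.io
    # Placeholder: object ID of the Azure AD group that should get access
    name: "00000000-0000-0000-0000-000000000000"
EOF
echo "wrote dev-readonly-rbac.yaml"
```

Members of that group could then read workloads in the `dev` namespace but not change them, which keeps day-to-day access narrower than cluster admin rights.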

Azure DevOps

Azure Pipelines can deploy to AKS automatically. With Azure DevOps, you can use it to build, test, and deploy applications with continuous integration (CI) and continuous delivery (CD).
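A pipeline for this flow might build and push the image with the `Docker@2` task and then deploy the manifest with the `KubernetesManifest@1` task. The sketch below writes such a pipeline definition to a file. The service connection names `my-acr-connection` and `my-aks-connection`, the `main` branch trigger, the `default` namespace, and the image and manifest names are assumptions; the service connections would have to be created in the Azure DevOps project beforehand.

```shell
# Write a hypothetical azure-pipelines.yml; the quoted heredoc keeps $(...) literal.
cat > azure-pipelines.yml <<'EOF'
trigger:
  - main

pool:
  vmImage: ubuntu-latest

variables:
  imageRepository: api-service
  tag: $(Build.BuildId)

steps:
  - task: Docker@2
    displayName: Build and push image to ACR
    inputs:
      command: buildAndPush
      containerRegistry: my-acr-connection      # Docker registry service connection
      repository: $(imageRepository)
      dockerfile: Dockerfile
      tags: |
        $(tag)

  - task: KubernetesManifest@1
    displayName: Deploy to AKS
    inputs:
      action: deploy
      connectionType: kubernetesServiceConnection
      kubernetesServiceConnection: my-aks-connection
      namespace: default
      manifests: api-service.yaml
      containers: mycontainerregistry.azurecr.io/$(imageRepository):$(tag)
EOF
echo "wrote azure-pipelines.yml"
```

Tagging the image with the build ID means each pipeline run deploys a uniquely identifiable image, which makes it easier to trace a running pod back to the build that produced it.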

To learn more about microservices orchestration using Azure Kubernetes Service, check out Apexon’s Cloud Platform and Engineering Services.